Search Engine Submission in 2026: What Actually Works (Hint: Not Submission)

Google retired its standalone "Add URL" submission page in 2018. The replacement, a "Request Indexing" button inside Search Console's URL Inspection tool, exists mostly as a debugging aid, not a formal way to submit a site to Google. And yet the entire concept of search engine submission persists in blog posts, agency upsells, and "submit your site to 500 search engines!" services that somehow still charge money. Here's the uncomfortable claim: manually submitting URLs to search engines produces no measurable indexing advantage over doing absolutely nothing, and the time you spend on submission would be better spent on any other SEO activity.

The rest of this article defends that claim with three specific pieces of evidence. If you understand how search engines discover and index pages, you already sense where this is going.

Search Engines Don't Wait for You to Knock

The mental model behind search engine submission assumes that Google, Bing, and others sit passively until you hand them a URL. That model was roughly accurate in 1998. It hasn't been accurate for over two decades.

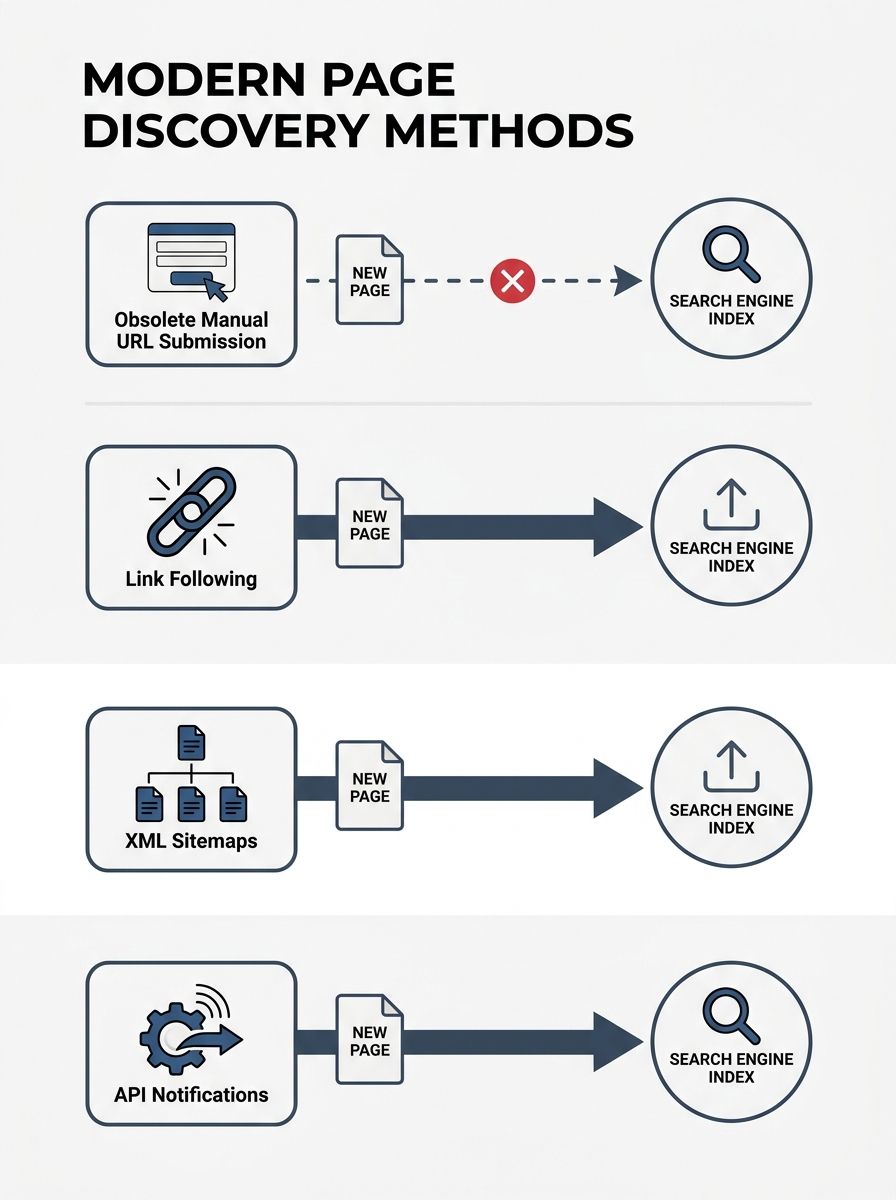

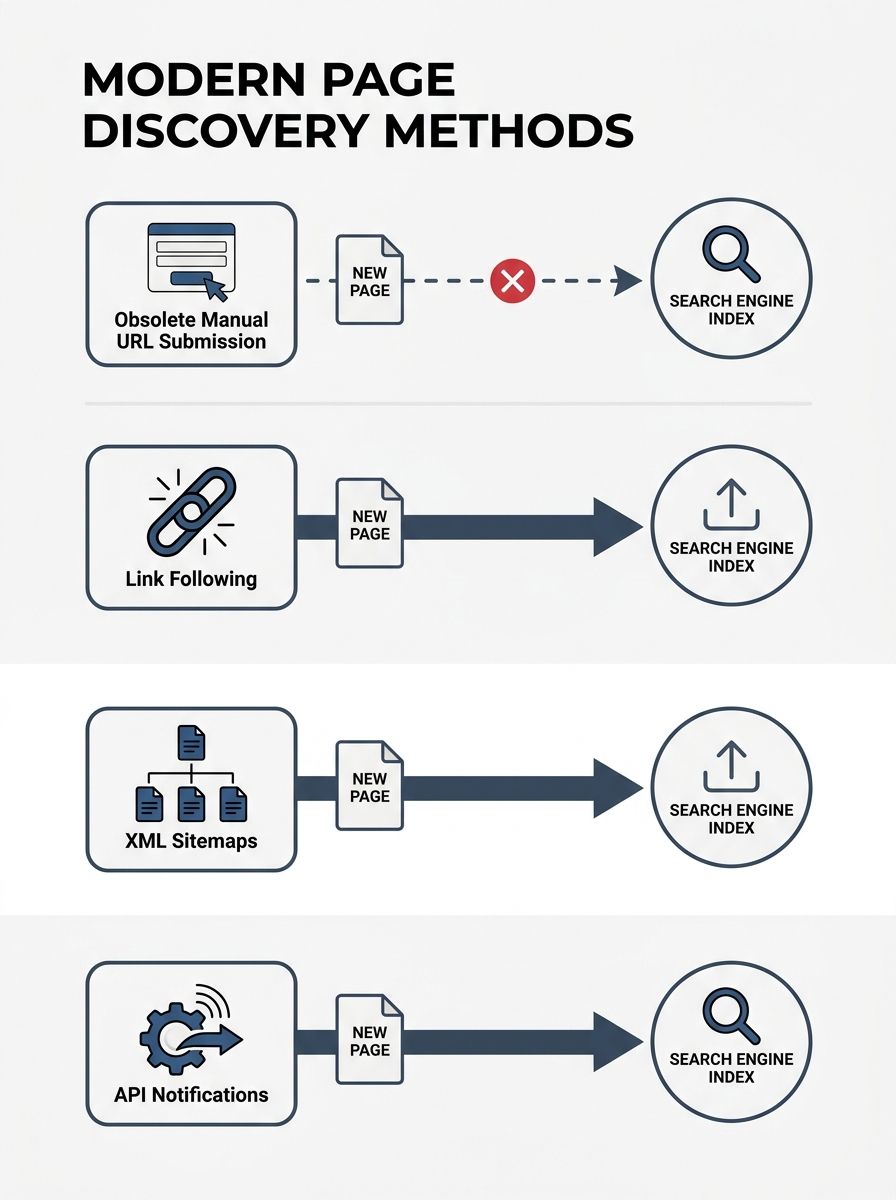

Modern crawlers discover pages through three mechanisms that operate continuously without any manual input from you:

Link following. Googlebot and Bingbot follow links from pages they already know about. If your new page is linked from an existing indexed page (your homepage, your blog index, an external site), crawlers will find it. For a well-linked page on a healthy site, the gap between publication and first crawl is typically hours, not days.

XML sitemaps. When you register a sitemap in Google Search Console, you're giving crawlers a structured map of every URL on your site that you want crawled. Google's own documentation describes XML as the most versatile of the sitemap formats it supports. Bing Webmaster Tools supports the same format. Once submitted, the sitemap is re-crawled automatically on a regular schedule. You set it up once, and as long as your CMS regenerates the file when it adds pages, it stays current without further work.

API-driven notifications. The IndexNow protocol and the Google Indexing API push URL change notifications to search engines in real time. These are the closest things to active "submission" that exist today, and they're fundamentally different from the old model. More on both below.

None of these three mechanisms require you to visit a form, paste a URL, and click "Submit." The submission step has been automated out of existence, and for good reason: it was always a bottleneck, not a benefit.
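To make the sitemap mechanism concrete, here is a minimal sketch of the kind of file a CMS regenerates on each publish. It uses only Python's standard library; the function name `build_sitemap` and the example.com URLs are assumptions for illustration, not part of any particular CMS or plugin.

```python
import xml.etree.ElementTree as ET
from datetime import date

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML as a string for (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url, last_modified in pages:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
        # lastmod uses the ISO 8601 date format (YYYY-MM-DD).
        ET.SubElement(entry, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical pages; a real CMS would read these from its database.
example_pages = [
    ("https://example.com/", date(2026, 1, 5)),
    ("https://example.com/blog/new-post", date(2026, 1, 12)),
]
sitemap_xml = build_sitemap(example_pages)
print(sitemap_xml)
```

The point of the sketch is that nothing here involves a form or a button. The file is rebuilt when content changes, and crawlers pick it up on their next scheduled fetch.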

The IndexNow Protocol Is a Notification, and Google Still Doesn't Use It

If you've encountered advice to use the IndexNow protocol as a way to get indexed by Google, you should know that Google does not participate in IndexNow. The protocol was launched in October 2021 as a collaboration between Microsoft Bing and Yandex, and it remains primarily a Bing ecosystem tool. Search engines that adopt IndexNow agree to share submitted URLs with all other participating engines, which is a nice efficiency gain for the Bing/Yandex side of the web. But Google has never joined, and there's no public indication it plans to.

What IndexNow actually does is useful, though. When you publish or update a page, a plugin or server-side script pings an endpoint with the changed URL. Bing and other participating engines then know to re-crawl that specific page. For WordPress sites, several plugins handle this automatically with zero configuration. That's a genuine improvement over waiting for Bing's crawler to find changes organically, especially if you're running a news site or e-commerce catalog where freshness matters.

For Bing Webmaster Tools workflows, IndexNow has essentially replaced manual URL submission. You configure it once, and every publish event triggers a notification. That's how it should work.
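For readers curious what that notification looks like on the wire, the sketch below builds (but does not send) an IndexNow request with the standard library. The endpoint and JSON field names follow the public IndexNow documentation as I understand it; the host, the key value, and the helper name `build_indexnow_request` are hypothetical, and a real key must also be hosted as a text file on your site so engines can verify ownership.

```python
import json
import urllib.request

# Shared endpoint that forwards notifications to participating engines.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build a POST request announcing changed URLs. Nothing is sent here."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file sits at the site root; adjust if yours differs.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Hypothetical values for illustration only.
request = build_indexnow_request(
    "example.com",
    "hypothetical-key-123",
    ["https://example.com/blog/new-post"],
)
print(request.get_method(), request.full_url)
# To send it for real: urllib.request.urlopen(request)
```

A WordPress plugin does the equivalent of this on every publish event, which is why no manual step remains.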

The Google Indexing API is a different tool with a narrower scope. Google restricts it to specific content types, primarily job postings and livestream content with BroadcastEvent markup. If your site publishes standard blog posts, product pages, or informational content, the Google Indexing API isn't available to you. There are alternative approaches that try to work around this restriction, but Google's own guidance is clear: for general content, use sitemaps and rely on natural crawling.

So the two "modern submission" tools that exist either don't cover Google (IndexNow) or don't cover general content (Google Indexing API). For most site owners, the answer to how to get indexed by Google remains boringly simple: publish the page, make sure it's linked from your site's navigation or internal links, confirm your sitemap is registered in Search Console, and wait. The crawl happens on Google's schedule, and for healthy sites with reasonable URL structures, that schedule is fast.
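The "make sure it's linked" step is easy to verify mechanically. The following is a rough sketch, assuming you already have the HTML of a page Google knows about (for example, your homepage); `LinkCollector` and `is_linked_from` are illustrative names, and the HTML snippet is made up.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collect absolute URLs from every <a href> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if href:
            # Resolve relative links against the page's own URL.
            self.links.add(urljoin(self.base_url, href))


def is_linked_from(page_html, page_url, target_url):
    """Return True if page_html contains a link that resolves to target_url."""
    collector = LinkCollector(page_url)
    collector.feed(page_html)
    return target_url in collector.links


# Hypothetical homepage markup.
homepage_html = '<nav><a href="/blog/">Blog</a> <a href="/blog/new-post">New post</a></nav>'
linked = is_linked_from(
    homepage_html, "https://example.com/", "https://example.com/blog/new-post"
)
print(linked)
```

This compares URLs as exact strings, so trailing slashes and tracking parameters would need normalizing in a real check. It also only sees links present in the raw HTML, not ones injected by JavaScript.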

The Indexing Problem Is Almost Never a Submission Problem

Here's where the search engine submission myth causes real damage: when pages aren't getting indexed, people assume the problem is that Google doesn't know about them. They submit the URL manually, wait, submit again, try a third-party indexing service, and eventually conclude that Google is broken or biased against their site.

The actual problem is almost always one of three things:

The content doesn't justify indexing

Google's index isn't a filing cabinet that stores everything it finds. It's a curated collection, and pages that are thin, duplicative, or low-value get discovered by crawlers and then deliberately left out. If you publish a 200-word page that restates information available on 50 other sites, Google will crawl it, evaluate it, and choose not to index it. Submitting the URL again won't change that evaluation. Improving the content will.

This is where on-page optimization actually matters. A page with original depth, clear structure, and genuine expertise signals gives Google a reason to keep it in the index. A page without those qualities gets dropped regardless of how many times you submit it.

The site has technical crawl barriers

The usual culprits: pages blocked by robots.txt, pages that return soft 404s, pages buried behind JavaScript that Googlebot can't render, pages with session ID parameters creating duplicate URLs, and pages with noindex tags that someone added during staging and forgot to remove. These are crawlability and indexability problems, and resubmitting the URL fixes none of them. No amount of URL submission bypasses a noindex directive.
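Two of these barriers, robots.txt blocks and leftover noindex tags, can be checked with a few lines of standard-library Python. This is a simplified sketch: the helper names are made up, the robots.txt and HTML samples are invented, Python's `urllib.robotparser` does not replicate every detail of Googlebot's matching rules, and a noindex sent via the `X-Robots-Tag` HTTP header is not covered.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def blocked_by_robots(robots_txt, url, user_agent="Googlebot"):
    """Return True if the given robots.txt text disallows url for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return not parser.can_fetch(user_agent, url)


class RobotsMetaFinder(HTMLParser):
    """Detect <meta name="robots" content="...noindex..."> in a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        name = (attributes.get("name") or "").lower()
        content = (attributes.get("content") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot") and "noindex" in content:
            self.noindex = True


def has_noindex(page_html):
    """Return True if the page carries a robots meta tag containing noindex."""
    finder = RobotsMetaFinder()
    finder.feed(page_html)
    return finder.noindex


# Invented samples for illustration.
robots_txt = "User-agent: *\nDisallow: /staging/\n"
staging_page = '<html><head><meta name="robots" content="noindex, nofollow"></head></html>'

print(blocked_by_robots(robots_txt, "https://example.com/staging/page"))
print(has_noindex(staging_page))
```

If either check comes back true for a page you want indexed, that is the problem to fix before touching any submission tool.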

The site lacks inbound signals

A brand-new domain with no backlinks, no social mentions, and no internal linking structure from other indexed pages is hard for crawlers to discover and hard for ranking algorithms to trust. The solution is building genuine authority through white-hat link building and content that earns references, not submitting URLs to a form.

The common thread across all three problems: the fix is always about improving the site, never about improving the submission.

What About Those "Submit to 500 Search Engines" Services?

They're selling something that is free to do yourself (ping a URL) to search engines that either don't exist or don't matter. Bing, Google, Yandex, and a handful of regional engines account for essentially all search traffic globally. You can register with each of their webmaster tools in an afternoon. The remaining "495 search engines" on those lists are directories, meta-search engines, or defunct services.

If you're paying for a submission service, you're paying for a solved problem. The concepts behind it are well-covered in any good SEO glossary, and the actual work takes less time than reading this article.

The Claim, Revisited

Manual search engine submission was a meaningful task in the early 2000s, when crawl coverage was incomplete and search engines genuinely needed help finding pages. That era ended a long time ago. The web in 2026 has mature crawling infrastructure, XML sitemaps processed automatically by every major engine, and real-time notification protocols like IndexNow for engines that support them.

The persistent advice to "submit your site to Google" survives because it feels productive. Pasting a URL into a form and clicking a button gives you the satisfying sense that you've done something. But the pattern is consistent: the pages that get indexed quickly are pages that are well-linked, technically sound, and valuable enough to justify their place in the index. The pages that sit in the "Discovered - currently not indexed" or "Crawled - currently not indexed" limbo in Search Console almost always have a quality or technical problem that no submission will fix.

If you're spending time on search engine submission, redirect that time toward the things that actually determine whether your pages get indexed and ranked. Write content with original depth. Fix your crawl errors. Build your sitemap correctly and register it in Search Console and Bing Webmaster Tools. Set up IndexNow for Bing coverage. And then let the crawlers do what they've been doing automatically for the better part of two decades. The submission part was always the least important step in the process, and today it's not really a step at all.

OrganicSEO.org Editorial

Editorial team writing about ethical, white-hat, organic SEO education.

Frequently Asked Questions

- Do I need to manually submit my website to Google?

- No. Google automatically discovers pages through link following, XML sitemaps, and crawling. Manual URL submission produces no measurable indexing advantage, and Google retired its standalone submission page in 2018. The time spent on submission is better spent on other SEO activities.

- How do search engines find new pages without submission?

- Search engines discover pages through three mechanisms: following links from already-indexed pages, crawling XML sitemaps automatically, and real-time notifications via protocols like IndexNow. Well-linked pages on healthy sites are typically crawled within hours of publication.

- Does Google support the IndexNow protocol?

- No. Google does not participate in IndexNow, which is a collaboration between Microsoft Bing and Yandex launched in 2021. IndexNow is useful for Bing coverage but does not help with Google indexing.

- Why aren't my pages being indexed by Google?

- Indexing problems are almost never submission problems. Common causes include thin or duplicative content that doesn't justify indexing, technical crawl barriers like robots.txt blocks or noindex tags, or lack of inbound signals and internal links. Check Search Console's URL Inspection tool to diagnose the specific issue.

- What should I do instead of submitting URLs to search engines?

- Focus on writing original, valuable content with clear structure, fix any crawl errors, set up and register your XML sitemap in Search Console and Bing Webmaster Tools, and configure IndexNow for Bing coverage. These activities directly impact indexing far more than submission.

- Are 'submit to 500 search engines' services worth paying for?

- No. These services charge money for free work that takes an afternoon to do yourself. The major search engines that matter are Google, Bing, Yandex, and a few regional engines. The remaining 495 on these lists are directories, meta-search engines, or defunct services with no meaningful traffic.

- What is the Google Indexing API and who can use it?

- The Google Indexing API is restricted to specific content types, primarily job postings and livestream content with BroadcastEvent markup. For standard blog posts and informational content, it's not available, and Google recommends using sitemaps and natural crawling instead.